A growing concern has emerged over the incompatibility of artificial intelligence (AI) systems with data privacy. Recent evidence suggests that AI systems designed to predict user behavior inevitably learn to represent and leverage political opinions and other sensitive data categories. This raises questions about the effectiveness of existing data protection measures, particularly under the General Data Protection Regulation (GDPR), which prohibits the processing of sensitive personal data unless narrow exceptions apply. The issue has sparked debate among experts, with some arguing that AI systems and data privacy may be fundamentally incompatible.

The Incompatibility Conundrum

| Field | Details |
| --- | --- |
| Event | AI systems may be incompatible with data privacy |
| Date | 2 days ago |
| Location | Global |
| Key People/Organizations involved | Paul Bouchaud, Pedro Ramaciotti |
| Status/Current Situation | Unclear |
| Key Regulation | General Data Protection Regulation (GDPR) |
| Article | Article 9 of GDPR |
| Data Type | Sensitive personal data |
| Data Categories | Political opinions, religious beliefs, sexual orientation, biometric data |

The General Data Protection Regulation, a cornerstone of European data protection law, prohibits the processing of sensitive personal data unless narrow exceptions apply. This regulation was designed for a world where sensitive data had to be actively collected. However, the advent of AI systems has raised questions about whether this framework remains effective. AI systems, designed to predict user behavior, inevitably learn to represent and leverage sensitive data categories, including political opinions and other protected characteristics.

This blurs the boundary between deliberate and inadvertent political profiling. The social media platform X provides a stark illustration: its algorithmic systems operate along a continuum. At one end, platforms explicitly classify users into political or religious categories; at the other, recommendation algorithms and conversational bots silently encode ideology into user profiles. Between these extremes lie systems that must infer each user’s political position to function at all.

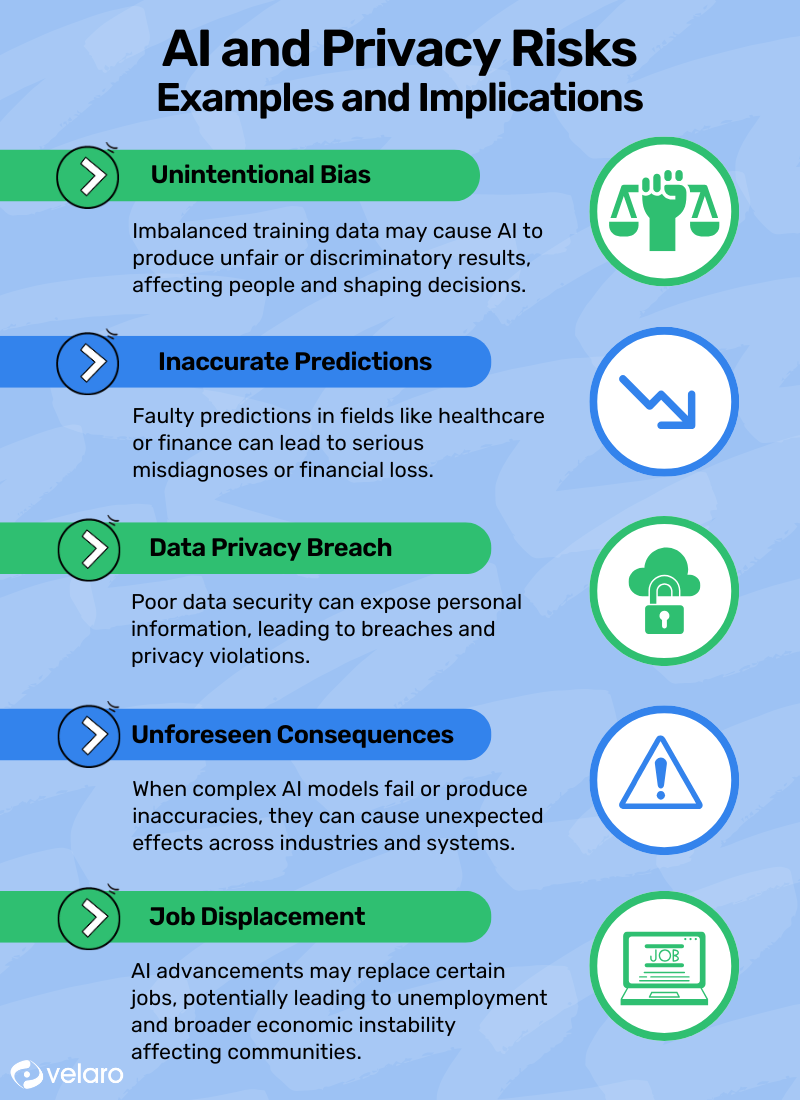

The tension between AI systems and data privacy is a fundamental one. As AI systems become increasingly prevalent, they inevitably learn to represent and leverage sensitive data categories, raising questions about whether the current regulatory framework is sufficient to protect user rights. This issue will be explored in greater depth in the following sections, where we examine the limits of learning, the balance between innovation and privacy, and the ethics of AI development.

The Limits of Learning

Artificial intelligence systems have raised concerns about their compatibility with data privacy. In a world where sensitive personal data is protected, AI systems can infer and exploit this information without explicit consent. This blurs the boundary between deliberate and inadvertent political profiling. Online behavior reflects personal beliefs that powerful AI models can infer and leverage, highlighting a fundamental tension between innovation and data protection.

Recent evidence suggests that AI systems designed to predict user behavior inevitably learn to represent and leverage sensitive data categories, because online behavior reflects personal beliefs that sufficiently powerful models can infer and exploit. The social media platform X provides a stark illustration: its algorithmic systems span a continuum, from explicit classification of users to the silent encoding of ideology into user profiles as a byproduct of optimizing for engagement or text processing.
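The byproduct effect described above can be illustrated with a toy simulation. The sketch below is not the method used by the researchers or by any platform. It assumes synthetic users with a hidden binary "leaning" that makes them more likely to click on items with a matching "slant", and it fits a one-dimensional factorization of the click matrix by power iteration. All function names, probabilities, and sizes are hypothetical choices made for illustration. The model only ever sees clicks, yet in this setup the learned user factor tends to line up closely with the hidden leaning.

```python
import math
import random


def simulate_engagement(n_users=200, n_items=40, seed=0):
    """Generate synthetic clicks driven by a hidden binary leaning (toy assumption)."""
    rng = random.Random(seed)
    leaning = [rng.choice([-1, 1]) for _ in range(n_users)]  # never shown to the model
    slant = [rng.choice([-1, 1]) for _ in range(n_items)]
    # Assumed click probabilities: 0.8 when leaning matches slant, 0.2 otherwise.
    clicks = [
        [1 if rng.random() < (0.8 if user == item else 0.2) else 0 for item in slant]
        for user in leaning
    ]
    return leaning, clicks


def learn_user_factor(clicks, n_iter=50, seed=1):
    """Fit a one-dimensional factorization of the click matrix by power iteration."""
    rng = random.Random(seed)
    n_users, n_items = len(clicks), len(clicks[0])
    mean = sum(map(sum, clicks)) / (n_users * n_items)
    centered = [[c - mean for c in row] for row in clicks]
    item_vec = [rng.uniform(-1.0, 1.0) for _ in range(n_items)]
    user_vec = [0.0] * n_users
    for _ in range(n_iter):
        # Project every user onto the current item direction.
        user_vec = [sum(r * v for r, v in zip(row, item_vec)) for row in centered]
        # Re-estimate the item direction from the user scores, then normalize it.
        item_vec = [
            sum(centered[u][j] * user_vec[u] for u in range(n_users))
            for j in range(n_items)
        ]
        norm = math.sqrt(sum(v * v for v in item_vec))
        item_vec = [v / norm for v in item_vec]
    return user_vec


def sign_agreement(user_vec, leaning):
    """Share of users whose factor sign matches the hidden leaning, up to a global flip."""
    hits = sum(1 for score, label in zip(user_vec, leaning) if (score > 0) == (label > 0))
    rate = hits / len(leaning)
    return max(rate, 1.0 - rate)  # the sign of a learned factor is arbitrary


if __name__ == "__main__":
    leaning, clicks = simulate_engagement()
    factor = learn_user_factor(clicks)
    print(f"agreement with hidden leaning: {sign_agreement(factor, leaning):.2f}")
```

Nothing in the fitting step refers to the hidden attribute; it emerges because it is the strongest pattern in the engagement data. Real recommendation models are far more complex, so this should be read only as an intuition for why such encoding can arise without being requested.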

The inference of sensitive characteristics without explicit consent raises questions about the limits of learning in AI systems. As AI becomes increasingly sophisticated, it is essential to explore the boundary between deliberate and inadvertent political profiling. This requires a nuanced understanding of the relationship between AI, data privacy, and innovation, highlighting the need for a balanced approach to the development of AI systems.

Balancing Innovation and Privacy

The rapid advancement of artificial intelligence (AI) systems has raised significant concerns about data privacy. In a world where AI systems can infer sensitive characteristics without explicit consent, the boundaries between deliberate and inadvertent political profiling are becoming increasingly blurred. This issue is not unique to any particular platform, but rather a fundamental tension that arises when AI systems are designed to optimize for engagement or text processing.

The General Data Protection Regulation (GDPR) in Europe prohibits the processing of sensitive personal data, including political opinions and biometric data, unless narrow exceptions apply. However, AI systems often learn to represent and leverage these sensitive data categories as a byproduct of predicting user behavior. This raises questions about the compatibility of AI systems with data privacy regulations.

As AI systems become more pervasive in our daily lives, it is essential to strike a balance between innovation and privacy. The GDPR’s Article 9 serves as a crucial framework for protecting sensitive personal data, but its application in the context of AI systems requires careful consideration. By exploring the boundary between deliberate and inadvertent political profiling, we can better understand the implications of AI systems on data privacy and work towards developing more responsible AI solutions.

The Ethics of AI Development

The development of AI systems has raised significant concerns about data privacy. In a world where sensitive personal data is protected by regulations such as the General Data Protection Regulation, AI systems are blurring the boundary between deliberate and inadvertent political profiling. Recent evidence suggests that AI systems designed to predict user behavior inevitably learn to represent and leverage political opinions and other sensitive data categories, even if they are not explicitly asked to do so.

This issue is particularly evident in social media platforms, where algorithms and conversational bots silently encode ideology into user profiles as a byproduct of optimizing for engagement or text processing. Platforms that explicitly classify users into political or religious categories are just one end of a continuum, with recommendation algorithms and conversational bots occupying the other end. The social media platform X provides a stark illustration of these systems, exposing a fundamental tension between AI development and data privacy.

The incompatibility between AI systems and data privacy is a pressing concern that requires careful consideration. As AI systems become increasingly sophisticated, they are learning to infer sensitive characteristics without ever being asked to do so. This raises important questions about the ethics of AI development and the need for greater transparency and accountability in the tech industry. The ability of AI systems to infer sensitive data categories without explicit consent blurs the boundary between deliberate and inadvertent political profiling, highlighting the need for a more nuanced approach to data protection.

The Future of AI and Data Privacy

As AI systems become increasingly sophisticated, concerns are growing about their compatibility with data privacy. The General Data Protection Regulation in Europe, for instance, prohibits the processing of sensitive personal data, including political opinions and religious beliefs, unless narrow exceptions apply. However, AI systems can infer sensitive characteristics without ever being asked to do so, blurring the boundary between deliberate and inadvertent political profiling.

This issue is not unique to any one platform, but rather a fundamental tension inherent in the design of AI systems. Social media platforms often use algorithmic systems to optimize for engagement or text processing, which can lead to the silent encoding of ideology into user profiles. This raises important questions about the balance between innovation and privacy. As AI systems become more pervasive, it is essential to consider the implications of their design for data privacy.

The European Union’s General Data Protection Regulation was designed for a world in which sensitive data had to be actively collected. However, the rise of AI systems has created new challenges for data protection. The regulation’s Article 9 prohibits the processing of sensitive personal data unless narrow exceptions apply, but AI systems can infer this information without explicit consent. This highlights the need for a more nuanced approach to data protection in the age of AI.

Call to Action

As the use of AI systems becomes increasingly prevalent, concerns have been raised about their compatibility with data privacy. The processing of sensitive personal data, including political opinions and religious beliefs, is a critical issue that requires attention. Recent evidence suggests that AI systems designed to predict user behavior inevitably learn to represent and leverage sensitive data categories. This blurs the boundary between deliberate and inadvertent political profiling, raising questions about the limits of data protection.

The social media platform X provides a stark illustration of the tension between AI systems and data privacy. The platform’s algorithmic systems need to infer user characteristics in order to function, yet doing so risks inadvertently processing sensitive data. This highlights the need for a more nuanced understanding of the relationship between AI systems and data protection. By examining the ways in which AI systems process and represent user data, we can better understand the implications for data privacy.

As we explore the complexities of AI systems and data privacy, it becomes clear that a call to action is necessary. The processing of sensitive data by AI systems requires a reevaluation of our data protection frameworks. By acknowledging the limitations of current regulations and the potential risks of AI systems, we can work towards creating a more balanced approach to innovation and data protection. This will require a collaborative effort from policymakers, industry leaders, and civil society to ensure that AI systems are designed with data privacy in mind.

Source: Tech Policy Press